Controllability results for impulsive mixed-type functional integro-differential evolution equations with nonlocal conditions

Fixed Point Theory and Applications volume 2013, Article number: 66 (2013)

Abstract

In this paper, we establish the controllability for a class of abstract impulsive mixed-type functional integro-differential equations with finite delay in a Banach space. Some sufficient conditions for controllability are obtained by using the Mönch fixed point theorem via measures of noncompactness and semigroup theory. Particularly, we do not assume the compactness of the evolution system. An example is given to illustrate the effectiveness of our results.

MSC: 93B05, 34A37, 34G20.

1 Introduction

In recent years, the theory of impulsive differential equations has provided a natural framework for the mathematical modeling of many real-world phenomena, notably in control, biology and medicine. In these models, the processes under investigation are subject to perturbations whose duration is negligible in comparison with the total duration of the process. Such perturbations can be reasonably well approximated as instantaneous changes of state, that is, as impulses. These processes are more suitably modeled by impulsive differential equations, which allow for discontinuities in the evolution of the state. For more details on this theory and its applications, we refer to the monographs of Bainov and Simeonov [1], Lakshmikantham et al. [2] and Samoilenko and Perestyuk [3] and to the papers [4–12].

On the other hand, the concept of controllability is of great importance in mathematical control theory. The problem of controllability is to show the existence of a control function, which steers the solution of the system from its initial state to the final state, where the initial and final states may vary over the entire space. Many authors have studied the controllability of nonlinear systems with and without impulses; see, for instance, [13–18]. In recent years, significant progress has been made in the controllability of linear and nonlinear deterministic systems [14, 16, 19–24], and the nonlocal initial condition, in many cases, has a much better effect in applications than the traditional initial condition. As remarked by Byszewski and Lakshmikantham (see [25, 26]), the nonlocal initial value problems can be more useful than the standard initial value problems to describe many physical phenomena.

The study of Volterra-Fredholm integro-differential equations plays an important role in the abstract formulation of many initial and boundary value problems for perturbed partial integro-differential equations. Recently, many authors have studied mixed-type integro-differential systems with or without delay conditions [27–31]. In [16], the controllability of impulsive functional differential systems with nonlocal conditions was studied by using measures of noncompactness and the Mönch fixed point theorem, and some sufficient conditions for controllability were established. Without assuming the compactness of the evolution system, [29] establishes the existence, uniqueness and continuous dependence of mild solutions for nonlinear mixed-type integro-differential equations with finite delay and nonlocal conditions; those results are obtained by using the Banach fixed point theorem and semigroup theory.

More recently, Shengli Xie [31] derived the existence of mild solutions for nonlinear mixed-type integro-differential functional evolution equations with nonlocal conditions; the results were achieved by using the Mönch fixed point theorem and fixed point theory. Here some restrictive conditions on a priori estimates and measures of noncompactness estimation were not used even if the generator .

To the best of our knowledge, no work has yet reported on the controllability of impulsive mixed Volterra-Fredholm functional integro-differential evolution systems with finite delay and nonlocal conditions. Filling this gap is the main aim of the present work.

This paper is motivated by the recent works [16, 29, 31] and its main purpose is to establish sufficient conditions for the controllability of the impulsive mixed-type functional integro-differential system with finite delay and nonlocal conditions of the form

where is a family of linear operators which generates an evolution system . The state variable takes the values in the real Banach space X with the norm . The control function is given in , a Banach space of admissible control functions with V as a Banach space, and thereby . B is a bounded linear operator from V into X. The nonlinear operators , and are continuous, where = { is continuous everywhere except for a finite number of points at which and exist and }; , , are impulsive functions, , is the jump of a function ξ at , defined by .

For any function and any , denotes the function in defined by

where is defined in Section 2. Here represents the history of the state from the time up to the present time t.

Our work is organized as follows. In the next section, fundamental notions and facts related to measures of noncompactness (MNC) are recalled. Section 3 is devoted to the controllability results for the problem (1.1)-(1.3). Section 4 contains an illustrative example.

2 Preliminaries

In this section, we recall some fundamental definitions and lemmas which are required to demonstrate our main results (see [20–24, 32–35]).

Let be the space of X-valued Bochner integrable functions on with the norm . In order to define the solution of the problem (1.1)-(1.3), we consider the following space: = { such that is continuous except for a finite number of points at which and exist and }.

It is easy to verify that is a Banach space with the norm

For our convenience, let and ; , .

Definition 2.1 Let $E^{+}$ be the positive cone of an ordered Banach space $(E, \le)$. A function Φ defined on the set of all bounded subsets of the Banach space X with values in $E^{+}$ is called a measure of noncompactness (MNC) on X if $\Phi(\overline{\mathrm{co}}\,\Omega) = \Phi(\Omega)$ for all bounded subsets $\Omega \subseteq X$, where $\overline{\mathrm{co}}\,\Omega$ stands for the closed convex hull of Ω.

The MNC Φ is said to be

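(1) Monotone if for all bounded subsets $\Omega_1$, $\Omega_2$ of X we have $\Omega_1 \subseteq \Omega_2 \Rightarrow \Phi(\Omega_1) \le \Phi(\Omega_2)$;

(2) Nonsingular if $\Phi(\{a\} \cup \Omega) = \Phi(\Omega)$ for every $a \in X$, $\Omega \subset X$;

(3) Regular if $\Phi(\Omega) = 0$ if and only if Ω is relatively compact in X.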

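One of the many examples of MNC is the Hausdorff measure of noncompactness β, defined on each bounded subset Ω of X by

$$\beta(\Omega) = \inf\{\varepsilon > 0 : \Omega \text{ has a finite } \varepsilon\text{-net in } X\}.$$

It is well known that the MNC β satisfies the above properties as well as the following ones (see [32, 33]): for all bounded subsets Ω, $\Omega_1$, $\Omega_2$ of X,

(4) $\beta(\Omega_1 + \Omega_2) \le \beta(\Omega_1) + \beta(\Omega_2)$, where $\Omega_1 + \Omega_2 = \{x + y : x \in \Omega_1,\ y \in \Omega_2\}$;

(5) $\beta(\Omega_1 \cup \Omega_2) \le \max\{\beta(\Omega_1), \beta(\Omega_2)\}$;

(6) $\beta(\lambda\Omega) \le |\lambda|\,\beta(\Omega)$ for any $\lambda \in \mathbb{R}$;

(7) If the map $Q : D(Q) \subseteq X \to Z$ is Lipschitz continuous with a constant k, then $\beta_Z(Q\Omega) \le k\,\beta(\Omega)$ for any bounded subset $\Omega \subseteq D(Q)$, where Z is a Banach space.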
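The Hausdorff MNC of a bounded set is usually described as the infimum of the radii ε for which the set admits a finite ε-net. The following minimal sketch illustrates that idea numerically in the simplest possible setting, assuming X = ℝ and Ω = [0, 1] replaced by a finite sample. The function names `covering_radius` and `uniform_net` are hypothetical helpers introduced only for this illustration. It computes, for a fixed finite net, the smallest ε for which that net is an ε-net of the sample, which is an upper bound for β of the sampled set.

```python
def covering_radius(omega, net):
    """Smallest eps for which `net` is an eps-net of the finite sample `omega`."""
    return max(min(abs(x - s) for s in net) for x in omega)


def uniform_net(n):
    """n equally spaced points on [0, 1] (requires n >= 2)."""
    return [j / (n - 1) for j in range(n)]


# Finite sample standing in for the bounded set Omega = [0, 1] in X = R
# (an assumption made for illustration; the paper works in a general Banach space).
omega = [i / 1000 for i in range(1001)]

# Refining the net shrinks the radius; the infimum over all finite nets is 0,
# in line with the regularity property: [0, 1] is compact, so beta([0, 1]) = 0.
for n in (3, 5, 9, 17):
    print(n, covering_radius(omega, uniform_net(n)))
```

In an infinite-dimensional space the picture is different: the closed unit ball admits no finite ε-net for ε < 1, which is why noncompact evolution systems require the MNC machinery used in this paper.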

Definition 2.2 A two-parameter family of bounded linear operators , , on X is called an evolution system if the following two conditions are satisfied:

(i) , for ;

(ii) is strongly continuous for .

Since the evolution system is strongly continuous on the compact operator set , there exists such that for any . More details about the evolution system can be found in Pazy [34].
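To make the notion of an evolution system concrete, here is a small sketch in the scalar case. It assumes the usual axioms from Pazy [34], namely U(s, s) = I and U(t, r)U(r, s) = U(t, s) for s ≤ r ≤ t, and uses a hypothetical generator A(t) = −(1 + t) on the interval [0, b] with b = 2. Neither the generator nor the interval is taken from the paper. The script checks both axioms on a grid and computes the uniform bound on the compact set 0 ≤ s ≤ t ≤ b.

```python
import math


def U(t, s):
    """Evolution system of x'(t) = a(t) x(t) with the hypothetical a(t) = -(1 + t).

    U(t, s) = exp(integral of a over [s, t]); the integral is written in closed form.
    """
    return math.exp(-((t - s) + (t * t - s * s) / 2.0))


b = 2.0  # illustrative final time, not taken from the paper
grid = [b * i / 50 for i in range(51)]

# Axiom (i), first part: U(s, s) is the identity.
identity_ok = all(abs(U(s, s) - 1.0) < 1e-12 for s in grid)

# Axiom (i), second part: U(t, r) U(r, s) = U(t, s) whenever s <= r <= t.
cocycle_ok = all(
    abs(U(t, r) * U(r, s) - U(t, s)) < 1e-12
    for s in grid for r in grid for t in grid
    if s <= r <= t
)

# Uniform bound of |U(t, s)| over the sampled triangle 0 <= s <= t <= b.
bound = max(U(t, s) for s in grid for t in grid if s <= t)
print(identity_ok, cocycle_ok, bound)
```

In this scalar example the bound is attained on the diagonal t = s, since the exponent is nonpositive whenever s ≤ t.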

Definition 2.3 A function is said to be a mild solution of the system (1.1)-(1.3) if on , , , the restriction of to the interval () is continuous and the following integral equation is satisfied.

Definition 2.4 The system (1.1)-(1.3) is said to be nonlocally controllable on the interval J if, for every initial function and , there exists a control such that the mild solution of (1.1)-(1.3) satisfies .
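As a rough finite-dimensional analogue of this definition, the sketch below steers a double integrator p'' = u from an initial state to a prescribed final state on [0, b]. It is only an illustration under strong simplifying assumptions: no impulses, no delay, no nonlocal term, no nonlinearity, and a constant generator, so that U(t, s) = e^{A(t−s)}. The names `steering_control` and `simulate` are hypothetical. The control is built from the inverse of the controllability Gramian, which plays the role that the inverse of the operator W plays in hypothesis (H6).

```python
def steering_control(x0, x1, b):
    """Return u(s) steering p'' = u from x0 = (p0, v0) to x1 = (p1, v1) at time b."""
    # Controllability Gramian of the double integrator on [0, b], in closed form:
    # W = integral over [0, b] of [(b-s), 1]^T [(b-s), 1] ds.
    w11, w12, w22 = b**3 / 3.0, b**2 / 2.0, b
    det = w11 * w22 - w12 * w12  # equals b**4 / 12, positive for b > 0
    # Gap between the target and the uncontrolled final state exp(A b) x0.
    d0 = x1[0] - (x0[0] + b * x0[1])
    d1 = x1[1] - x0[1]
    # eta = W^{-1} d, with the 2x2 inverse written out by hand.
    eta0 = (w22 * d0 - w12 * d1) / det
    eta1 = (-w12 * d0 + w11 * d1) / det
    # u(s) = B^T exp(A^T (b - s)) eta = (b - s) * eta0 + eta1.
    return lambda s: (b - s) * eta0 + eta1


def simulate(x0, u, b, steps=2000):
    """Integrate p' = v, v' = u(t) on [0, b] with the classical RK4 scheme."""
    h = b / steps
    p, v = x0
    t = 0.0
    for _ in range(steps):
        k1p, k1v = v, u(t)
        k2p, k2v = v + 0.5 * h * k1v, u(t + 0.5 * h)
        k3p, k3v = v + 0.5 * h * k2v, u(t + 0.5 * h)
        k4p, k4v = v + h * k3v, u(t + h)
        p += h * (k1p + 2 * k2p + 2 * k3p + k4p) / 6.0
        v += h * (k1v + 2 * k2v + 2 * k3v + k4v) / 6.0
        t += h
    return p, v


# Steer from rest at the origin to rest at position 1 in unit time.
u = steering_control((0.0, 0.0), (1.0, 0.0), 1.0)
final_state = simulate((0.0, 0.0), u, 1.0)
print(final_state)
```

In the infinite-dimensional impulsive setting of this paper, the same construction is only the starting point: the control also has to compensate for the nonlinear, nonlocal and impulsive terms, which is why a fixed point argument is needed.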

Definition 2.5 A countable set is said to be semicompact if the sequence is relatively compact in X for almost all , and if there is a function satisfying for a.e. .

Lemma 2.1 (See [32])

If is bounded and equicontinuous, then is continuous for and

Lemma 2.2 (See [12])

If is bounded and piecewise equicontinuous on , then is piecewise continuous for and

Lemma 2.3 (See [19])

Let be a sequence of functions in . Assume that there exist satisfying and a.e. , then for all , we have

Lemma 2.4 (See [19])

Let . If is semicompact, then the set is relatively compact in . Moreover, if , then for all ,

The following fixed-point theorem, a nonlinear alternative of Mönch type, plays a key role in our proof of controllability of the system (1.1)-(1.3).

Lemma 2.5 (See [35, Theorem 2.2])

Let D be a closed convex subset of a Banach space X and . Assume that is a continuous map which satisfies Mönch’s condition, that is, ( is countable, is compact). Then F has a fixed point in D.
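For orientation, Mönch’s condition is commonly stated in the following form. This is the standard formulation, given here as an assumption because the symbols in the lemma did not survive in the text; the set name C and the constant N are illustrative. The second display sketches how such a condition is typically verified with a measure of noncompactness β that is monotone, nonsingular and invariant under closed convex hulls, assuming an estimate β(F(C)) ≤ Nβ(C) with 0 ≤ N < 1.

```latex
% Assumed standard form of Mönch's condition, with 0 \in D and F : D \to D continuous.
\[
  C \subseteq D \ \text{countable}, \qquad
  C \subseteq \overline{\mathrm{co}}\bigl(\{0\} \cup F(C)\bigr)
  \;\Longrightarrow\; \overline{C} \ \text{is compact}.
\]
% Typical verification via an MNC estimate (N is a hypothetical constant with 0 \le N < 1).
\[
  \beta(C) \le \beta\bigl(\overline{\mathrm{co}}(\{0\} \cup F(C))\bigr)
           = \beta\bigl(F(C)\bigr)
           \le N\,\beta(C)
  \;\Longrightarrow\; \beta(C) = 0 ,
\]
% so C is relatively compact by the regularity of \beta.
```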

3 Controllability results

In this section, we present and demonstrate the controllability results for the problem (1.1)-(1.3). In order to demonstrate the main theorem of this section, we list the following hypotheses.

(H1) is a family of linear operators, , not depending on t and a dense subset of X, generating an equicontinuous evolution system , i.e., is equicontinuous for and for all bounded subsets B and .

-

(H2)

The function satisfies the following:

-

(i)

For , the function is continuous, and for all , the function is strongly measurable.

-

(ii)

For every positive integer , there exists such that

and

-

(iii)

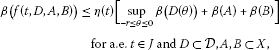

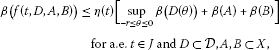

There exists an integrable function such that

where .

-

(i)

(H3) The function satisfies the following:

(i) For each , the function is continuous, and for each , the function is strongly measurable.

(ii) There exists a function such that

(iii) There exists an integrable function such that

and , where and β is the Hausdorff MNC.

For convenience, let us take and .

(H4) The function satisfies the following:

(i) For each , the function is continuous, and for each , the function is strongly measurable.

(ii) There exists a function such that

(iii) There exists an integrable function such that

and , where .

For convenience, let us take and .

(H5) is a continuous compact operator such that

(H6) The linear operator is defined by

(i) W has an invertible operator which takes values in , and there exist positive constants and such that

(ii) There is such that, for every bounded set ,

(H7) , , is a continuous operator such that

(i) There are nondecreasing functions such that

and

(ii) There exist constants such that

for every bounded subset S of .

(H8) The following estimate holds true:

Theorem 3.1 Assume that the hypotheses (H1)-(H8) are satisfied. Then the impulsive differential system (1.1)-(1.3) is controllable on J provided that

Proof Using the hypothesis (H6)(i), for every , define the control

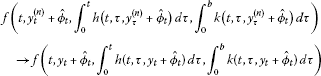

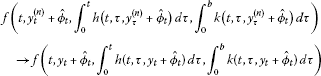

We shall now show that when using this control, the operator defined by

has a fixed point. This fixed point is then a solution of (1.1)-(1.3). Clearly, , which implies the system (1.1)-(1.3) is controllable. We rewrite the problem (1.1)-(1.3) as follows.

For , we define by

Then . Let , . It is easy to see that y satisfies and

where

if and only if x satisfies

and , . Define . Let be an operator defined by

Obviously, the operator F has a fixed point if and only if G has one, so it suffices to prove that G has a fixed point. Let , where

Step 1: There exists a positive number such that , where .

Suppose the contrary. Then for each positive integer q, there exists a function but , i.e., for some .

We have from (H1)-(H7)

Since

where and , we have

where

Hence by (3.5)

where is independent of q and .

Dividing both sides by q and noting that as , we obtain

Thus we have

This contradicts (3.1). Hence, for some positive number q, .

Step 2: is continuous.

Let with in . Then there is a number such that for all n and , so and .

From (H2) and (H5) we have

(i)

and

(ii) , .

Then we have

and

where

Observing (3.7)-(3.9), by the dominated convergence theorem, we have that

That is, G is continuous.

Step 3: G is equicontinuous on every , . That is, is piecewise equicontinuous on J.

Indeed, for , and , we deduce that

By the equicontinuity of and the absolute continuity of the Lebesgue integral, we can see that the right-hand side of (3.10) tends to zero and is independent of y as . Hence is equicontinuous on ().

Step 4: Mönch’s condition holds.

Suppose is countable and . We shall show that , where β is the Hausdorff MNC.

Without loss of generality, we may assume that . Since G maps into an equicontinuous family, is equicontinuous on . Hence is also equicontinuous on every .

By (H7)(ii) we have

By Lemma 2.3 and from (H3)(iii), (H4)(iii), (H6)(ii) and (H7)(ii), we have that

This implies that

From (3.11) and (3.13) we obtain that

for each .

Since W and are equicontinuous on every , according to Lemma 2.2, the inequality (3.14) implies that

That is, , where N is defined in (H8). Thus, from Mönch’s condition, we get that

since , which implies that . So, we have that W is relatively compact in . In view of Lemma 2.5, i.e., Mönch’s fixed point theorem, we conclude that G has a fixed point y in W. Then is a fixed point of F in , and thus the system (1.1)-(1.3) is nonlocally controllable on the interval . This completes the proof. □

We remark that the conditions (H1)-(H8) given above are only shown to be sufficient. It remains an open problem either to prove that they are also necessary or to find an example showing clearly that they are not necessary for the main result proved in this section.

4 An example

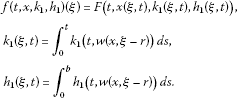

Consider the partial functional integro-differential systems with impulsive conditions of the form

where , , , = {, ψ is continuous everywhere except for a countable number of points at which , exist with }, , , , .

Let and be defined by with the domain . It is well known that A is an infinitesimal generator of a semigroup defined by for each . is not a compact semigroup on X and , where β is the Hausdorff MNC. We also define the bounded linear control operator by

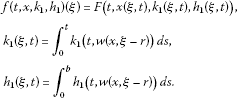

We assume that

(1) is a continuous function defined by

We take , is a constant. F is Lipschitz continuous for the second variable. Then f satisfies the hypotheses (H2) and (H3) of Section 3.

(2) is a continuous function for each defined by

We take , , , for each . Then is compact and satisfies the hypothesis (H6)(i).

(3) is a continuous function defined by

with . Then g is a compact operator and satisfies the hypothesis (H5).

Therefore, the above partial differential system (4.1)-(4.4) can be written in the abstract form (1.1)-(1.3), and all conditions of Theorem 3.1 are satisfied. We conclude that the system (4.1)-(4.4) is nonlocally controllable on the interval J.

Conclusions

In this paper, we have established sufficient conditions for the controllability of a class of impulsive mixed-type functional integro-differential equations with finite delay and nonlocal conditions. The proof of the main theorem is based on the Mönch fixed point theorem and does not assume compactness of the evolution system. An example is also included to illustrate the technique.

References

Bainov DD, Simeonov PS: Impulsive Differential Equations: Periodic Solutions and Applications. Longman, Harlow; 1993.

Lakshmikantham V, Bainov DD, Simeonov PS: Theory of Impulsive Differential Equations. World Scientific, Singapore; 1989.

Samoilenko AM, Perestyuk NA: Impulsive Differential Equations. World Scientific, Singapore; 1995.

Balachandran K, Annapoorani N: Existence results for impulsive neutral evolution integrodifferential equations with infinite delay. Nonlinear Anal. 2009, 3: 674–684.

Benchohra M, Henderson J, Ntouyas SK: Existence results for impulsive multivalued semilinear neutral functional inclusions in Banach spaces. J. Math. Anal. Appl. 2001, 263: 763–780. 10.1006/jmaa.2001.7663

Fan Z: Impulsive problems for semilinear differential equations with nonlocal conditions. Nonlinear Anal. 2010, 72: 1104–1109. 10.1016/j.na.2009.07.049

Hernandez E, Rabello M, Henriquez H: Existence of solutions for impulsive partial neutral functional differential equations. J. Math. Anal. Appl. 2007, 331: 1135–1158. 10.1016/j.jmaa.2006.09.043

Ji S, Li G: Existence results for impulsive differential inclusions with nonlocal conditions. Comput. Math. Appl. 2011, 62: 1908–1915. 10.1016/j.camwa.2011.06.034

Sivasankaran S, Mallika Arjunan M, Vijayakumar V: Existence of global solutions for second order impulsive abstract partial differential equations. Nonlinear Anal. TMA 2011,74(17):6747–6757. 10.1016/j.na.2011.06.054

Vijayakumar V, Sivasankaran S, Mallika Arjunan M: Existence of global solutions for second order impulsive abstract functional integrodifferential equations. Dyn. Contin. Discrete Impuls. Syst. 2011, 18: 747–766.

Vijayakumar V, Sivasankaran S, Mallika Arjunan M: Existence of solutions for second-order impulsive neutral functional integro-differential equations with infinite delay. Nonlinear Stud. 2012,19(2):327–343.

Ye R: Existence of solutions for impulsive partial neutral functional differential equation with infinite delay. Nonlinear Anal. 2010, 73: 155–162. 10.1016/j.na.2010.03.008

Chang YK, Anguraj A, Mallika Arjunan M: Controllability of impulsive neutral functional differential inclusions with infinite delay in Banach spaces. Chaos Solitons Fractals 2009,39(4):1864–1876. 10.1016/j.chaos.2007.06.119

Chen L, Li G: Approximate controllability of impulsive differential equations with nonlocal conditions. Int. J. Nonlinear Sci. 2010, 10: 438–446.

Guo M, Xue X, Li R: Controllability of impulsive evolution inclusions with nonlocal conditions. J. Optim. Theory Appl. 2004, 120: 355–374.

Ji S, Li G, Wang M: Controllability of impulsive differential systems with nonlocal conditions. Appl. Math. Comput. 2011, 217: 6981–6989. 10.1016/j.amc.2011.01.107

Li M, Wang M, Zhang F: Controllability of impulsive functional differential systems in Banach spaces. Chaos Solitons Fractals 2006, 29: 175–181. 10.1016/j.chaos.2005.08.041

Selvi S, Mallika Arjunan M: Controllability results for impulsive differential systems with finite delay. J. Nonlinear Sci. Appl. 2012, 5: 206–219.

Obukhovski V, Zecca P: Controllability for systems governed by semilinear differential inclusions in a Banach space with a noncompact semigroup. Nonlinear Anal. 2009, 70: 3424–3436. 10.1016/j.na.2008.05.009

Klamka J: Schauder’s fixed-point theorem in nonlinear controllability problems. Control Cybern. 2000, 29(1): 153–165.

Klamka J: Constrained approximate controllability. IEEE Trans. Autom. Control 2000,45(9):1745–1749. 10.1109/9.880640

Klamka J: Constrained controllability of semilinear delayed systems. Bull. Pol. Acad. Sci., Tech. Sci. 2001,49(3):505–515.

Klamka J: Constrained controllability of semilinear systems. Nonlinear Anal. 2001, 47: 2939–2949. 10.1016/S0362-546X(01)00415-1

Klamka J: Constrained exact controllability of semilinear systems. Syst. Control Lett. 2002,4(2):139–147.

Byszewski L: Theorems about existence and uniqueness of solutions of a semi-linear evolution nonlocal Cauchy problem. J. Math. Anal. Appl. 1991, 162: 494–505. 10.1016/0022-247X(91)90164-U

Byszewski L, Lakshmikantham V: Theorem about the existence and uniqueness of a solution of a nonlocal abstract Cauchy problem in a Banach space. Appl. Anal. 1991, 40: 11–19. 10.1080/00036819008839989

Anguraj A, Karthikeyan P, Trujillo JJ: Existence of solutions to fractional mixed integro-differential equations with nonlocal initial condition. Adv. Differ. Equ. 2011. doi:10.1155/2011/690653

Chang YK, Chalishajar DN: Controllability of mixed Volterra-Fredholm type integro-differential inclusions in Banach spaces. J. Franklin Inst. 2008, 345: 499–507. 10.1016/j.jfranklin.2008.02.002

Dhakne MB, Kucche KD: Existence of a mild solution of mixed Volterra-Fredholm functional integro-differential equation with nonlocal condition. Appl. Math. Sci. 2011,5(8):359–366.

Ntouyas SK, Purnaras IK: Existence results for mixed Volterra-Fredholm type neutral functional integro-differential equations in Banach spaces. Nonlinear Stud. 2009,16(2):135–148.

Xie S: Existence of solutions for nonlinear mixed type integro-differential functional evolution equations with nonlocal conditions. Abstr. Appl. Anal. 2012. doi:10.1155/2012/913809

Banas J, Goebel K: Measure of Noncompactness in Banach Spaces. Lecture Notes in Pure and Applied Mathematics. Dekker, New York; 1980.

Kamenskii M, Obukhovskii V, Zecca P: Condensing Multivalued Maps and Semilinear Differential Inclusions in Banach Spaces. De Gruyter, Berlin; 2001.

Pazy A: Semigroups of Linear Operators and Applications to Partial Differential Equations. Springer, New York; 1983.

Mönch H: Boundary value problems for nonlinear ordinary differential equations of second order in Banach spaces. Nonlinear Anal. 1980, 4: 985–999. 10.1016/0362-546X(80)90010-3

Acknowledgements

Dedicated to Professor Hari M Srivastava.

The work is partially supported by project MTM2010-16499 from the Government of Spain.

Additional information

Competing interests

The authors declare that they have no competing interests.

Authors’ contributions

All authors contributed equally to the manuscript and typed, read, and approved the final manuscript.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 2.0 International License (https://creativecommons.org/licenses/by/2.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Machado, J.A., Ravichandran, C., Rivero, M. et al. Controllability results for impulsive mixed-type functional integro-differential evolution equations with nonlocal conditions. Fixed Point Theory Appl 2013, 66 (2013). https://doi.org/10.1186/1687-1812-2013-66

DOI: https://doi.org/10.1186/1687-1812-2013-66